Last week, in part 1, I set the scene: why testing is at a crossroads, what autonomous testing means as a spectrum (not a switch) for both teams and AI systems, and how autonomy functions best. I also discussed why autonomy within these AI systems matters: it removes the friction that stops people from doing their best work. Early signs of higher-level autonomy already exist in coding agents and tools, signalling a near future where AI can also accelerate our investigation, amplify Quality Engineering, and help us test more effectively and efficiently in general.

In part 2, I will get into what I think is the most important and most overlooked part of this shift: that “quality” has never been about “correctness” alone, and AI is forcing the industry to finally confront that truth.

Quality Has Always Been Bigger Than “Correctness”

For years, some organisations have defined quality in the narrowest possible way:

“Does it meet the requirements?”

(even if, at the same time, they haven’t been very good at defining requirements 😬)

Many companies, though, have taken a much wider, holistic view of quality, one that aligns more closely with how users think about it. Over the past 10-15 years, I’ve been describing this as three categories of quality:

- Goodness (relating to the grade of excellence of the product, which, by the way, is the dictionary definition of quality)

- Value (relating to the worth or usefulness of the product – which is a common definition from the testing communities: “value to someone who matters”)

- Correctness (relating to the product meeting explicit expectations that describe how it is and isn’t expected to operate – which is also how regulatory standards such as ISO tend to define quality).

This view of quality isn’t new... But one thing I’ve started to notice is that it’s becoming much, much more prominent across the software industry, especially when it comes to autonomous AI tools. It feels like a sudden realisation: many of the companies that held the narrower view are now looking at quality from these perspectives, and asking about the state of quality in these terms when they talk about the output from AI tools.

I’ve heard execs and board members start to talk about quality in terms of “it looks correct, but is it good?” and “is it valuable and useful for the teams?”.

And this shift changes everything!

For these people, it means that Testing is no longer just about verifying expected behaviour; it shifts towards investigation: gaining a deeper understanding of systems, observing behaviour and uncovering unknowns (the variables, the risks, different states, emergent properties, interaction mechanisms, beliefs, biases, safety, etc.).

From within this context, does “autonomy” sound exciting?

If tools can take on the scripted, repetitive, deterministic checking autonomously, how much autonomy does that grant Quality Engineers? They can finally invest their time in the work that moves the needle and actually enables the autonomous AI tools: the deep investigation, the behavioural analysis, and the uncovering of insights that shape better context for the AI and help shape better products through that holistic lens of quality.

To add to this, the AiT (Automation in Testing) philosophy could also be at play with these autonomous tools, with AI supporting Quality Engineers in those investigation and analysis activities.

The Human Side – What This Means for Testers and QEs

Let me be direct with my viewpoint: Testers aren’t going anywhere.

The rise of autonomy in AI makes the role of a tester more important, not less! But the role does need to evolve into what I have been calling Quality Engineering for the past decade or so.

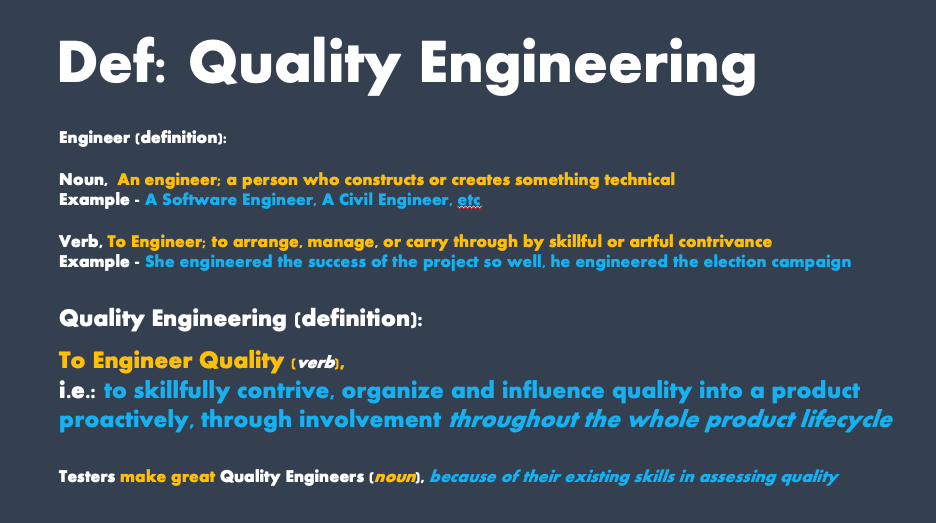

I define Quality Engineering as:

In the context of autonomous AI, Quality Engineers are:

- The curators of intent

- The designers of constraints and dependencies

- The interpreters of behaviour

- The strategists of risks

- The partners in shaping autonomous workflows

I know there’s scepticism. I hear it in the communities, and some of it is, of course, justified: the quality of AI tools today is inconsistent, can be unpredictable, and is occasionally infuriating if we leave certain context out of a prompt. (Side note: when building the impact-driven career framework web app, I failed to specify that admin changes to the mastery levels, role definitions, competencies and skills should be stored in a DB... It built the backend with all of this baked into local storage 😬. Very frustrating.)

One thing that this experience has really hammered home for me is the importance of integrity within our applications. When these tools generate code, workflows, or architectural ideas, they do so with what appears to be “absolute confidence”, even when they’ve quietly violated a constraint or made an assumption you never intended. And because the outputs often look coherent, it’s dangerously easy to miss where something fundamental has drifted. Integrity isn’t just “does it run OK?”; it’s much more than that, covering the boundaries, the behaviour, the dependencies, operability and understandability, maintainability, alignment with the value propositions we defined, etc.

Integrity is a huge part of quality. As I mentioned in part 1 (and above), quality is becoming more important, and much more prominent, in exec-level and board-level conversations. I’ve literally heard C-level folks and board members talk about quality beyond correctness, in the terms some of the testing community have used for decades and that I described at the top of this article: goodness, value, usefulness, worth, etc., alongside correctness.

And this is where human insight (married into that AI testing context, working together at the speed of AI development) becomes essential. Upstream, it means investigating to discover context, risks, assumptions, dependencies and constraints to feed into the AI systems, and helping to refine the requirements, design models, value-based objectives, etc., that also feed into them. Downstream, it means rigorously reviewing the outputs: spotting the subtle inconsistencies, the hidden coupling, the missing guardrails, the places where the system’s behaviour doesn’t match the story we’re telling, etc. Without this kind of scrutiny and support from people with the skills to do this work (investigation skills, lateral and critical thinking skills, etc.), autonomy becomes fragility dressed up as progress.

To me, this is exactly why Quality Engineers are so valuable and needed right now! Their investigative, critical and lateral thinking skills, and their ability to evaluate behaviour, spot inconsistencies and guide systems towards reliability, are exactly what these systems need in order to grow and evolve.

Given the current nature of these tools, they’ll never be 100% accurate, so we’ll always need this level of rigour, drawing on the skills and mindset of QEs across these systems... But as the tools improve, become easier to control and align more closely with engineering workflows, I also believe it’s the QEs who embrace autonomous testing tools who will help define the next era of AI in Quality Engineering.

The Future: A New Era of “AI in Quality Engineering”

In the current state, if you zoom out, AI can be an enabler: autonomy within these AI products will assist our “checking”, i.e. assessing the correctness aspect of quality. That covers the full spectrum: analysing requirements, analysing code, scraping through the product and its features, and building a bigger picture of the variables, risks, behaviours, etc. From there, it’ll automatically generate a bunch of test cases, generate automated checks, run them, and produce a report-style output.

The QEs will be the perfect “human in the loop” across this spectrum, but will also be dramatically freed up to explore, investigate, uncover unknowns, think about the value and impact for users, and guide the overall system from a much more holistic view of quality, throughout the whole SDLC.

And in the future, I see a potential path forward to using AI more in line with that “Automation in Testing” mindset: people will start using AI tools to help them explore, investigate, uncover risks and unknowns, and understand the value and impact on users from that holistic system perspective, across the scales of goodness, value and correctness.

And when that starts to happen, here’s the catch: the quality of our products will become much more dependent on the quality of our testing.

Autonomy within these tools AMPLIFIES that, and therefore gives QEs much more reach, visibility, trust and agency.

And this, in turn, gives organisations a new level of confidence, one that aligns with how they’re currently evolving their thinking about quality (beyond correctness).

That, in turn, gives our entire industry a path forward at a time when it’s felt stuck, with cost-cutting affecting engineering and testing roles en masse, and when complexity is outpacing capacity.

So… Testing isn’t just “improving”, it’s being redefined, with QEs at the helm.

How Quickly Are These AI Products Evolving?

I’ve been in the tech industry for around 25 years now. I’ve watched automation tools evolve and grow in capability, going through phases of record-and-playback, back to script-driven automation, and now AI-assisted workflows. But we’re right on the cusp of tools that consume information to build a picture of systems and behaviour, and that can automate with a level of autonomy. We see it with coding tools and observability tools, and it’s also happening with test automation. So this shift isn’t really theoretical anymore; it’s happening right now.

Over the past few weeks, I’ve had the privilege of diving deep into something that SmartBear is cooking up in this space, and it’s been incredible to see how fast they, and the industry in general, are moving in this direction. Not just incremental improvements within existing products, but genuine leaps: entirely new products built with autonomy at their core, along the lines I’ve described above.

Having been fortunate enough to be granted some early access, I can say the step change is real, and represents a new category of capability for supporting QEs.

No spoilers from me in this post about what they’re cooking, but you don’t have to wait long! SmartBear is going to unveil its vision of a future of testing enhanced by AI, and it’s something I’m sure you’ll want to pay attention to! 👀

To end this post, I’ll leave you with my final thoughts: companies are shifting their views on what quality is, driven by the increased adoption of AI in engineering workflows. With that, their expectations are evolving, and so is the role of the Quality Engineer right alongside them. Exciting times for some, though there will still be apprehension from others; but I don’t think we’ll need to wait too long to see how things evolve, as new AI products in this space are arriving imminently.

As always, I’d love to hear your thoughts in the comments!

This article is supported by SmartBear. They provided me with early access to their new product, so that I could play with it and share my experience. Don’t miss SmartBear’s upcoming livestream event on March 18, to hear their industry-changing announcement!